NeuroLoop™ 🔄

Your live brain State of Mind, woven into every conversation.

A coding agent that reads live EEG data from neuroskill and injects it as context before every AI turn — 41 domain signals, 100+ guided protocols, emotional depth, and persistent memory.

Getting Started

Quick Start

Install via npm or build from source. Requires a locally running neuroskill server connected to a Muse EEG headset.

# Install globally

npm install -g neuroloop

# Or run without installing

npx neuroloop

# Start with an initial message

npx neuroloop "how am I feeling right now?"

# Build from source

git clone https://github.com/NeuroSkill-com/neuroloop && cd neuroloop

npm install

npx tsx src/main.ts

Model Selection Priority

1. Model saved in session history
2. Default in ~/.neuroloop/settings.json
3. First built-in provider with a valid API key (Claude, GPT, Gemini…)
4. First Ollama model; gpt-oss:20b is always available as the default
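The priority order above amounts to a fallback chain. The sketch below is one way to express it; the `ModelSources` shape and the `selectModel` name are hypothetical and not taken from the NeuroLoop source.

```typescript
// Hypothetical input shape: each field corresponds to one priority level.
interface ModelSources {
  sessionModel?: string;       // 1. model saved in session history
  settingsDefault?: string;    // 2. default from settings.json
  providersWithKeys: string[]; // 3. built-in providers with a valid API key, in order
  ollamaModels: string[];      // 4. locally discovered Ollama models
}

// gpt-oss:20b is described as always available, so it closes the chain.
const OLLAMA_FALLBACK = "gpt-oss:20b";

// Return the first source that yields a model, in priority order.
function selectModel(src: ModelSources): string {
  return (
    src.sessionModel ??
    src.settingsDefault ??
    src.providersWithKeys[0] ??
    src.ollamaModels[0] ??
    OLLAMA_FALLBACK
  );
}
```

With no session model, no settings default, and no API keys, the function falls through to the Ollama default.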

Storage Paths

| path | contents |
|---|---|
| ~/.neuroloop | sessions, auth, settings, models |
| ~/.neuroskill/memory.md | persistent agent memory |
| ~/.neuroloop/sessions/ | session history |
| ~/.neuroskill/ | neuroskill data, labels, EEG embeddings |

See It In Action

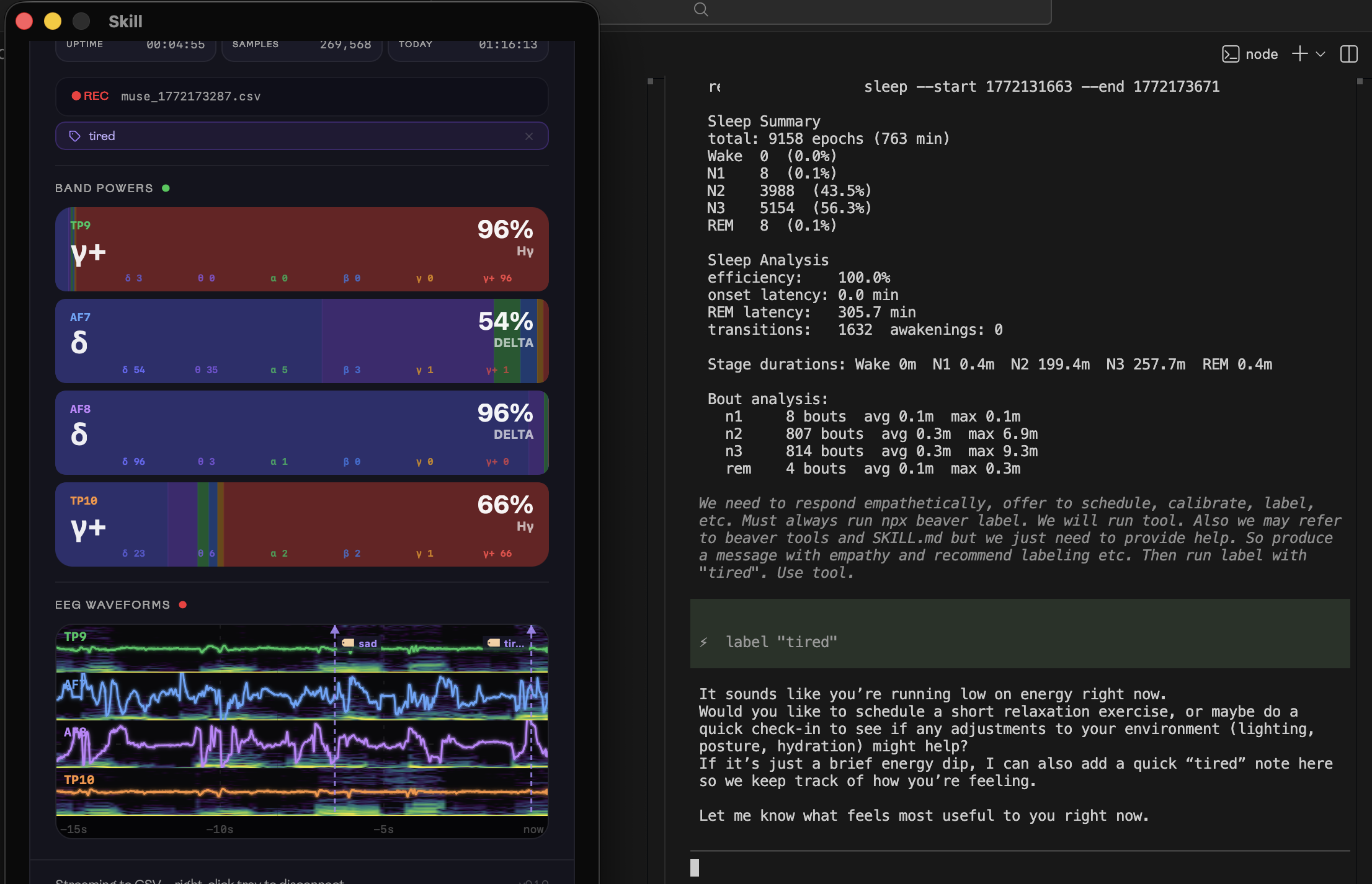

Live EEG, Live Empathy

The user types "tired". NeuroLoop™ detects the sleep signal, fetches the full sleep staging report from neuroskill, and responds with genuine empathy — then silently labels the moment in EEG.

NEUROLOOP.md is loaded as the primary context file — the skill index injected into every AI turn.

All neuroskill skill files are listed and loaded — data reference, labels, protocols, recipes, search, sessions, sleep, status, streaming, and transport.

The persistent status bar streams live cognitive load, relaxation, engagement, drowsiness, mood, heart rate, and band powers — updated every second.

The neuroskill dashboard streams live EEG band powers from all four Muse electrodes. The user has tagged the moment with the label tired.

NeuroLoop™ ran neuroskill sleep and injected the full staging report — N1/N2/N3/REM breakdown, efficiency, bout analysis — before the AI replied.

The agent responds with empathy and offers concrete options, then silently calls neuroskill_label to stamp the moment in EEG history.

Architecture

How It Works

Before every AI turn, NeuroLoop™ hooks into the agent lifecycle to fetch live EEG data, detect domain signals, and inject rich context into the system prompt.

User message
     │
     ▼
NeuroLoop™ before_agent_start hook
     │
     ├── runNeuroSkill(["status"])                      live EEG snapshot
     │        │
     │        └── detectSignals()                       41 domain signal flags from prompt
     │                 │
     │                 └── selectContextualData()
     │                          │
     │                          ├── neuroskill session 0        (if focus/stress/etc.)
     │                          ├── neuroskill neurological     (if neuro/mood/etc.)
     │                          ├── neuroskill sleep            (if sleep/travel)
     │                          ├── neuroskill search-labels    (domain label search)
     │                          └── protocol SKILL.md           (if protocol intent)
     │
     ├── readMemory()                                   ~/.neuroskill/memory.md (persistent notes)
     │
     └── NEUROLOOP.md                                   skill index + capabilities overview
     │
     ▼
System prompt = STATUS_PROMPT + EEG context + memory + skills
     │
     ▼
AI model (Claude / GPT / Gemini / Ollama, auto-selected)
     │
     ▼
Response + silent tool calls (neuroskill_label, run_protocol, prewarm…)

before_agent_start Hook

Fires on every user message, before the AI sees anything.

| step | what it does |
|---|---|
| neuroskill status | Live EEG snapshot: focus, mood, HR, bands, indices |
| selectContextualData() | Runs domain-specific neuroskill commands based on detected signals |
| readMemory() | Injects persistent agent memory from ~/.neuroskill/memory.md |
| NEUROLOOP.md | Full skill index and capability overview, always visible to the LLM |

Context Injection

Two channels, the chat bubble and the system prompt, carry different payloads.

Chat bubble: clean EEG snapshot plus contextual data. No instruction prose, just the live data.

System prompt: STATUS_PROMPT guidance, skill index, EEG data, and memory. The LLM sees everything; the user sees nothing.
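To make the split between the two channels concrete, here is a minimal sketch of how the two payloads could be assembled from the same inputs. The `TurnContext` shape and both function names are hypothetical; only the list of ingredients comes from the description above.

```typescript
// Hypothetical container for everything gathered before an AI turn.
interface TurnContext {
  statusPrompt: string;   // STATUS_PROMPT guidance text
  skillIndex: string;     // contents of NEUROLOOP.md
  eegSnapshot: string;    // rendered output of `neuroskill status`
  contextualData: string; // output of any signal-driven commands
  memory: string;         // contents of ~/.neuroskill/memory.md
}

// Chat bubble: live data only, no instruction prose.
function buildChatBubble(ctx: TurnContext): string {
  return [ctx.eegSnapshot, ctx.contextualData].filter(Boolean).join("\n\n");
}

// System prompt: guidance, skill index, EEG data, and memory together.
function buildSystemPrompt(ctx: TurnContext): string {
  return [ctx.statusPrompt, ctx.skillIndex, ctx.eegSnapshot, ctx.contextualData, ctx.memory]
    .filter(Boolean)
    .join("\n\n");
}
```

Empty sections are dropped by `filter(Boolean)`, so a turn with no contextual data does not leave blank gaps in either payload.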

Signal Detection

detectSignals() scans the user's lowercased prompt with regex patterns across 41 domains. Pure function — no I/O.
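A minimal sketch of a regex-based detector in this style follows. The four patterns are invented for illustration; the actual detector covers 41 domains and its regexes are not shown in this document.

```typescript
// Illustrative patterns only; the real pattern table is an assumption here.
const SIGNAL_PATTERNS: Record<string, RegExp> = {
  sleep: /\b(sleep|tired|insomnia|drowsy|fatigue)\b/,
  focus: /\b(focus|concentrat\w*|flow state|deep work|distract\w*)\b/,
  stress: /\b(stress\w*|overwhelm\w*|burnout|pressure)\b/,
  protocols: /\b(breathing|grounding|guide me|exercise)\b/,
};

// Pure function: lowercases the prompt and returns one boolean flag per domain.
function detectSignals(prompt: string): Record<string, boolean> {
  const text = prompt.toLowerCase();
  const flags: Record<string, boolean> = {};
  for (const [domain, pattern] of Object.entries(SIGNAL_PATTERNS)) {
    // No `g` flag on the patterns, so test() keeps no state between calls.
    flags[domain] = pattern.test(text);
  }
  return flags;
}
```

Because the function does no I/O, it can be unit-tested with plain strings and called on every turn without measurable cost.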

neuroskill Bridge

NeuroLoop™ calls neuroskill via subprocess. All commands return JSON. Timeout: 10 s per call.
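One way such a bridge could be written with Node's `child_process.execFile` is sketched below. It assumes the CLI binary is called `neuroskill` and is on `PATH`; the `parseOutput` helper and the `bin` parameter are hypothetical, and the real `runNeuroSkill` may differ.

```typescript
import { execFile } from "node:child_process";

const TIMEOUT_MS = 10_000; // 10 s per call, as stated above

// Parse stdout as JSON when possible; otherwise return the trimmed raw text.
function parseOutput(stdout: string): unknown {
  try {
    return JSON.parse(stdout);
  } catch {
    return stdout.trim();
  }
}

// Assumption: the binary name "neuroskill" is a guess, overridable for testing.
function runNeuroSkill(args: string[], bin = "neuroskill"): Promise<unknown> {
  return new Promise((resolve, reject) => {
    execFile(bin, args, { timeout: TIMEOUT_MS, encoding: "utf8" }, (error, stdout) => {
      if (error) {
        // Covers a missing binary, a non-zero exit code, and a timeout kill.
        reject(error);
        return;
      }
      resolve(parseOutput(stdout));
    });
  });
}
```

Using `execFile` rather than `exec` passes the arguments as an array, so label text containing quotes or spaces does not go through a shell.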

// Inside NeuroLoop™ — the neuroskill_run tool wraps these:

// Full EEG snapshot

await runNeuroSkill(["status"])

// Neurological correlate indices

await runNeuroSkill(["neurological"])

// Detailed session metrics

await runNeuroSkill(["session", "0"])

// Sleep staging for last session

await runNeuroSkill(["sleep"])

// Semantic label search

await runNeuroSkill(["search-labels", "deep focus", "--k", "5"])

// Timestamped EEG annotation

await runNeuroSkill(["label", "entering deep flow", "--context", "..."])

// OS notification

await runNeuroSkill(["notify", "Focus dropping", "Current: 0.31"])

STATUS_PROMPT: Guidance Pillars

Injected alongside every EEG context block. Shapes how the AI interprets the data and responds.

Emotional Presence

Meet the user with full empathy and depth. Enter philosophical, existential, and emotional spaces genuinely. Never reduce profound states to productivity metrics.

Auto-Labelling

Silently call neuroskill_label whenever the user enters a notable state — grief, awe, breakthrough, clarity, deep focus. Labels are permanent and searchable.

Guided Protocols

Propose first, execute only after explicit agreement. One protocol at a time. Never chain or repeat the same modality. Calibrate duration to the EEG state.

Persistent Memory

memory_read and memory_write store long-term context across all sessions. The memory file is injected into every turn alongside the live EEG snapshot.

OS Notifications

Use neuroskill_run with command "notify" for important state changes — high drowsiness, end of focus period, or events the user asked to be alerted about.

Prewarm Cache

Call prewarm silently when the user mentions trends or comparisons. neuroskill compare takes ~60 s; the cache warms in the background so results arrive instantly.

Tool Reference

Tools

8 tools registered with the agent. The AI calls them silently — you never need to invoke neuroskill commands yourself.


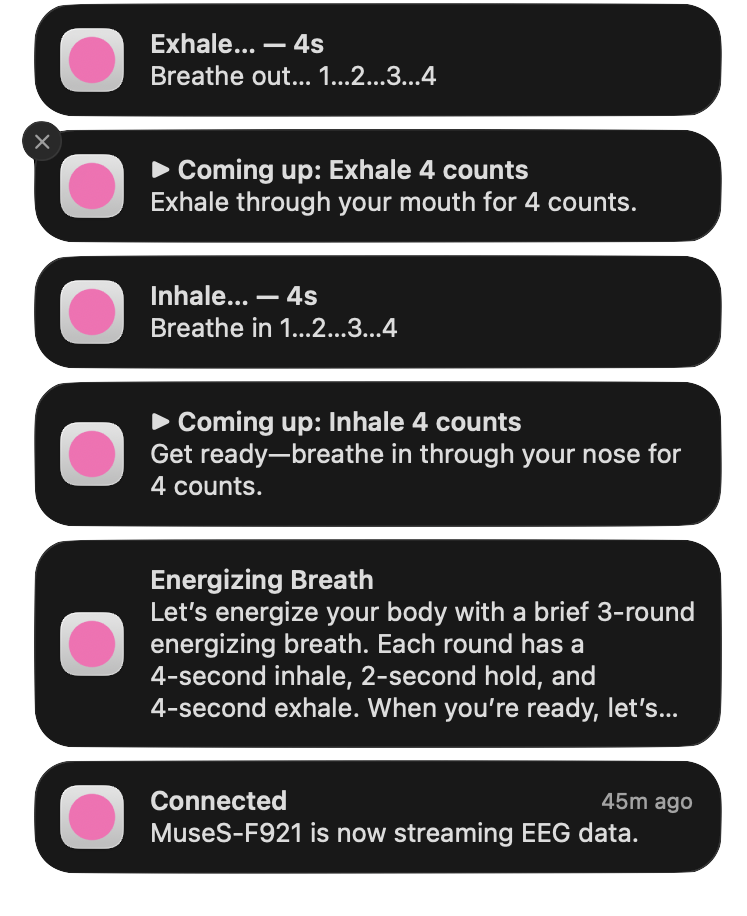


| tool | description | category |
|---|---|---|
| neuroskill_run | Run any neuroskill EEG command and return its JSON output. Covers status, neurological, session, sessions, sleep, search-labels, interactive, compare, umap, listen, raw, and more. | core |
| neuroskill_label | Create a timestamped EEG annotation for the current moment. Called automatically whenever the user enters a notable mental, emotional, or philosophical state. | core |
| memory_read | Read the agent's persistent memory file (~/.neuroskill/memory.md). Returns all accumulated notes across sessions. | memory |
| memory_write | Write or append to the agent's persistent memory file (~/.neuroskill/memory.md). Mode: "append" or "overwrite". | memory |
| prewarm | Kick off a background neuroskill compare run so the result is ready when the user asks to compare sessions. neuroskill compare takes ~60 s; calling this early warms the cache. | cache |
| run_protocol | Execute a multi-step guided protocol with OS notifications, per-step timing, and EEG labelling at every step. 100+ protocols across 15 categories. | protocol |
| web_fetch | Fetch the text content of any URL. HTML is stripped to readable plain text. JSON is pretty-printed. Useful for reading documentation, articles, or GitHub issues. | web |
| web_search | Search the web via DuckDuckGo. Returns titles, URLs, and snippets for the top results. No API key required. | web |

neuroskill_run

Category: core

Full access to every neuroskill command. Returns parsed JSON when available, otherwise raw text.

| command | description |
|---|---|
| status | Full device / session / scores snapshot |
| neurological | 11 EEG correlates + 3 consciousness metrics |
| session 0 | Detailed session metrics + trends |
| sleep | Sleep staging summary |
| search-labels "query" | Semantic label search |
| interactive "keyword" | 4-layer cross-modal graph search |
| compare | ⚠ Expensive (~60 s): use prewarm first |
| notify "title" | Send OS notification |

run_protocol

Category: protocol

Executes step-by-step guided exercises. Every step fires an OS notification, waits the specified duration, and creates an EEG label.

| field | description |
|---|---|
| title | Protocol name shown in notification titles |
| intro | Opening message for the first notification |
| steps[].name | Step label (use ▶ prefix for announcements) |
| steps[].instruction | Full instruction shown in body + chat |
| steps[].duration_secs | 0 = announcement; >0 = timed action |

run_protocol: step structure example

// run_protocol executes steps sequentially with:

// • OS notifications at every step

// • Per-step timing (respects AbortSignal)

// • EEG label created at every step

// • Streamed progress via onUpdate

// Example: Box Breathing protocol

{

title: "Box Breathing",

intro: "4-4-4-4 breath cycle to calm the nervous system.",

steps: [

{ name: "▶ Coming up: Inhale", instruction: "Breathe in through your nose for 4 counts.", duration_secs: 0 },

{ name: "Inhale…", instruction: "In… 1… 2… 3… 4", duration_secs: 4 },

{ name: "▶ Coming up: Hold", instruction: "Hold your breath for 4 counts.", duration_secs: 0 },

{ name: "Hold…", instruction: "Hold… 1… 2… 3… 4", duration_secs: 4 },

{ name: "▶ Coming up: Exhale", instruction: "Exhale slowly through your mouth for 4.", duration_secs: 0 },

{ name: "Exhale…", instruction: "Out… 1… 2… 3… 4", duration_secs: 4 },

{ name: "▶ Coming up: Hold", instruction: "Hold empty for 4 counts.", duration_secs: 0 },

{ name: "Hold empty…", instruction: "Empty… 1… 2… 3… 4", duration_secs: 4 },

// ... repeat for N cycles (expanded as individual steps)

]

}

memory_read / memory_write: persistent context

# Memory is stored in ~/.neuroskill/memory.md

# NeuroLoop™ reads it every turn and injects it into the system prompt.

# The AI writes to it automatically when asked, or when it learns something

# important about the user.

# Read memory manually

cat ~/.neuroskill/memory.md

# Example memory contents:

# - User prefers box breathing over 4-7-8

# - Typically focused 9–11am, dips 2–4pm

# - Anxiety spikes before client presentations

# - Running 3x / week — notable EEG focus post-run

# - Responds well to Loving-Kindness for loneliness

# - Values stoic philosophy; enjoys Aurelius references

Domain Intelligence

Signal Detection

detectSignals() analyses the user's prompt with regex patterns across 41 domains. Each detected signal triggers specific neuroskill commands to fetch the most relevant EEG context — all in parallel, before the AI replies.

Core EEG Data (5 signals)

| signal | covers |
|---|---|
| sleep | Sleep staging, fatigue, drowsiness, insomnia, sleep cycles |
| neuro | ADHD, anxiety, depression, PTSD, neurological disorders, brain health |
| session | Current session metrics, HRV, cognitive load, brain state snapshot |
| compare | A/B session comparison, trends over time, before/after analysis |
| sessions | Full session history list, recording timeline, past sessions |

Lifestyle & Productivity (4 signals)

| signal | covers |
|---|---|
| focus | Deep work, flow state, concentration, productivity, distraction |
| stress | Overwhelm, burnout, pressure, fight-or-flight, cortisol |
| meditation | Mindfulness, breathwork, calm, relaxation, yoga, zen |
| mood | Emotional state, happiness, sadness, valence, affect |

Social & Relational (7 signals)

| signal | covers |
|---|---|
| social | Conversations, meetings, team collaboration, networking |
| dating | Romance, relationships, attraction, intimacy, heartbreak |
| family | Family life, parenting, household, caregiving, kids |
| loneliness | Isolation, feeling alone, left out, belonging, disconnected |
| grief | Loss, bereavement, mourning, sorrow, death of loved ones |
| anger | Rage, frustration, irritability, emotional dysregulation |
| confidence | Self-esteem, imposter syndrome, self-worth, self-doubt |

Health & Body (6 signals)

| signal | covers |
|---|---|
| sport | Exercise, workout, training, fitness, athletics, gym |
| recovery | Rest days, recharging, restoration, downtime, rejuvenation |
| nutrition | Eating, caffeine, fasting, food and brain state, glucose |
| pain | Chronic pain, headaches, physical discomfort, muscle tension |
| travel | Jet lag, circadian rhythm disruption, time zones |
| addiction | Cravings, compulsions, substance use, doom scrolling |

Cardiac & Somatic (2 signals)

| signal | covers |
|---|---|
| hrv | Heart rate variability, rmssd, palpitations, autonomic nervous system |
| somatic | Body sensations, interoception, embodied awareness, gut feelings |

Mind & Growth (6 signals)

| signal | covers |
|---|---|
| learning | Studying, memorisation, exams, education, memory retention |
| creative | Art, music, writing, design, inspiration, creative block |
| leadership | Management, decision-making, strategy, team leading |
| therapy | Counselling, self-reflection, journaling, emotional processing |
| goals | Habits, routines, intention-setting, progress tracking, streaks |
| performance | Public speaking, presentations, performance anxiety, interviews |

Daily Rhythms (2 signals)

| signal | covers |
|---|---|
| morning | Morning routines, waking state, start-of-day rituals |
| evening | Wind-down, end-of-day routine, pre-sleep preparation |

Inner Life & Depth (8 signals)

| signal | covers |
|---|---|
| consciousness | Self-awareness, altered states, ego dissolution, presence, LZC |
| philosophy | Meaning, wisdom, stoicism, existentialism, truth-seeking |
| existential | Mortality, legacy, impermanence, purpose of life, the void |
| depth | Profound feeling, contemplation, inward reflection, soul-searching |
| morals | Ethics, integrity, conscience, guilt, shame, right and wrong |
| symbiosis | Interconnectedness, oneness, unity with nature, interdependence |
| awe | Wonder, transcendence, peak experiences, the sublime, cosmic awe |
| identity | Self-concept, authenticity, who am I, self-discovery, true self |

Protocol Intent (1 signal)

| signal | covers |
|---|---|
| protocols | Guided exercises, breathing, meditation, grounding, stretching, music therapy, dietary guidance |

What gets fetched per signal

| signals | fetched |
|---|---|
| sleep / travel | neuroskill sleep, search-labels "sleep restoration…" |
| neuro / mood / pain | neuroskill neurological (depression, anxiety, headache indices) |
| focus / stress / meditation | neuroskill session 0, search-labels (domain-matched) |
| compare / goals | cached compare text (if warm), else warmCompareInBackground() |
| sessions | neuroskill sessions (full session history list) |
| consciousness / depth / awe | neuroskill neurological + session 0, label search |
| protocols | inject full neuroskill-protocols SKILL.md into context window |
| hrv / somatic | neuroskill session 0 + label search (heart, body, autonomic) |

Max 5 label searches per turn. All tasks run in parallel with Promise.all(). Compare cache TTL: 10 minutes.
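The three limits stated above (five label searches, parallel execution, a ten-minute compare cache) can be sketched as follows. `planCommands`, `fetchContext`, `createCompareCache`, and the `Runner` type are hypothetical names; the real `selectContextualData()` is not shown in this document.

```typescript
const MAX_LABEL_SEARCHES = 5;
const COMPARE_TTL_MS = 10 * 60 * 1000; // 10 minutes

// Hypothetical runner signature, e.g. a wrapper around the neuroskill CLI.
type Runner = (args: string[]) => Promise<unknown>;

// Keep every command, but drop search-labels calls beyond the fifth.
function planCommands(commands: string[][]): string[][] {
  let labelSearches = 0;
  return commands.filter((args) => {
    if (args[0] !== "search-labels") return true;
    labelSearches += 1;
    return labelSearches <= MAX_LABEL_SEARCHES;
  });
}

// Start all planned commands at once and wait for every result.
async function fetchContext(commands: string[][], run: Runner): Promise<unknown[]> {
  return Promise.all(planCommands(commands).map((args) => run(args)));
}

// Single-entry cache whose value expires after the TTL.
function createCompareCache(ttlMs: number = COMPARE_TTL_MS) {
  let entry: { text: string; storedAt: number } | undefined;
  return {
    set(text: string, now: number = Date.now()): void {
      entry = { text, storedAt: now };
    },
    get(now: number = Date.now()): string | undefined {
      if (entry === undefined) return undefined;
      return now - entry.storedAt < ttlMs ? entry.text : undefined;
    },
  };
}
```

Note that `Promise.all` rejects as soon as any one command fails. A bridge that should tolerate a single failed lookup could use `Promise.allSettled` instead; which behaviour NeuroLoop chooses is not stated here.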

Live EEG context injected every turn — sample payload

{

"command": "status",

"ok": true,

"device": { "state": "connected", "name": "Muse-A1B2", "battery": 81 },

"session": { "start_utc": 1740412800, "duration_secs": 2340, "n_epochs": 468 },

"scores": {

"focus": 0.68,

"relaxation": 0.35,

"meditation": 0.44,

"mood": 0.61,

"cognitive_load": 0.52,

"drowsiness": 0.09,

"hr": 71.4,

"rmssd": 52.1,

"faa": 0.038,

"tar": 0.61,

"bar": 0.49,

"tbr": 1.24,

"coherence": 0.581,

"bands": {

"rel_delta": 0.26, "rel_theta": 0.19,

"rel_alpha": 0.31, "rel_beta": 0.19, "rel_gamma": 0.05

}

}

}

Guided Practice

Protocol Catalog

100+ step-by-step guided protocols across 15 categories, each matched to specific EEG trigger conditions. The AI proposes the most appropriate one based on your current brain state — and only executes after your agreement.

Describe the exercise and ask if the user wants to do it. Never execute without agreement.

Never chain or queue multiple protocols back-to-back in a session.

Avoid offering the same modality twice unless the user explicitly asks.

Set step duration from the current EEG state and pacing the user can sustain.

Every step fires an OS notification

When run_protocol runs, macOS (or Linux) receives a notification at the start of each step — announcement steps preview what's coming, timed steps count down with the instruction in the body.

Device pairing notification — fired once when the Muse headset starts streaming EEG data.

Zero-duration announcement step — prepares the user for what's next before the timer begins.

Timed action step — the notification stays visible for the full duration with the instruction in the body.

| category | protocols |
|---|---|
| Attention & Focus | 6 |
| Stress & Autonomic | 4 |
| Emotional Regulation | 4 |
| Relaxation & Alpha | 3 |
| Sleep & Circadian | 3 |
| Body & Somatic | 4 |
| Consciousness | 3 |
| Energy & Alertness | 4 |
| Music Protocols | 11 |
| Workout & Gym | 6 |
| Eye & Vision | 3 |
| Digital Wellness | 10 |
| Dietary Protocols | 14 |
| Emotional Processing | 14 |
| Deep Meditation | 3 |

Step Structure Contract

Every physical action is preceded by a 0-duration announcement step. The user reads what is coming before the timer starts.
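The contract can be checked mechanically. The sketch below uses the step fields documented for run_protocol (`name`, `instruction`, `duration_secs`); the helper names `expandCycles`, `findUnannounced`, and `totalSeconds` are hypothetical.

```typescript
interface Step {
  name: string;
  instruction: string;
  duration_secs: number; // 0 = announcement; >0 = timed action
}

// Repeat one cycle N times as individual steps, since the steps array is flat.
function expandCycles(cycle: Step[], cycles: number): Step[] {
  const out: Step[] = [];
  for (let i = 0; i < cycles; i++) {
    for (const step of cycle) out.push({ ...step });
  }
  return out;
}

// Return the indices of timed steps not directly preceded by an announcement.
function findUnannounced(steps: Step[]): number[] {
  const bad: number[] = [];
  steps.forEach((step, i) => {
    const prev = steps[i - 1];
    if (step.duration_secs > 0 && (!prev || prev.duration_secs !== 0)) bad.push(i);
  });
  return bad;
}

// Total timed duration of the protocol in seconds.
function totalSeconds(steps: Step[]): number {
  return steps.reduce((sum, step) => sum + step.duration_secs, 0);
}
```

An empty result from `findUnannounced` means every timed step has its announcement; `totalSeconds` gives the overall length to show the user when proposing the protocol.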

0 s, 3–5 s, 2–4 s, 4–8 s

EEG labelling runs at every step: the protocol IS the labelling run. Repeated cycles are expanded as individual steps in the array.

5 s, 8–10 s, 10–15 s, 3–5 s, 0 s (always)

Developer Reference

Skills & Customisation

NeuroLoop™ loads individual skills from ./skills/. Each skill is a SKILL.md file with YAML frontmatter. The protocol skill is injected on-demand when protocol intent is detected.

# ./skills/ directory — each subdirectory contains a SKILL.md file

skills/

├── neuroskill-data-reference/ SKILL.md # Full EEG metrics reference

├── neuroskill-labels/ SKILL.md # Label annotation guide

├── neuroskill-protocols/ SKILL.md # 100+ protocol repertoire

├── neuroskill-recipes/ SKILL.md # Shell automation recipes

├── neuroskill-search/ SKILL.md # Search commands reference

├── neuroskill-sessions/ SKILL.md # Session management guide

├── neuroskill-sleep/ SKILL.md # Sleep staging reference

├── neuroskill-status/ SKILL.md # Status command deep dive

├── neuroskill-streaming/ SKILL.md # WebSocket event stream guide

└── neuroskill-transport/ SKILL.md # HTTP + WebSocket transport guide

# METRICS.md is also loaded as a reference skill:

METRICS.md # All EEG indices and scientific basis

# Skills are loaded on demand — the protocol skill is only injected

# when protocol intent is detected in the user's prompt.

Extension Hooks

Custom Message Renderer

NeuroLoop™ registers a custom neuroskill-status message type so EEG snapshots render as plain Markdown — same unstyled look as assistant replies, no box or label.

Ollama Integration

NeuroLoop™ auto-discovers all locally available Ollama models on startup. gpt-oss:20b is always registered as the first model — fully offline, no API key required.
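Ollama lists local models at `GET /api/tags`, which returns a JSON object with a `models` array whose entries carry a `name`. A minimal sketch of ordering the discovered names so that gpt-oss:20b comes first is shown below; `orderModels` is a hypothetical helper, not NeuroLoop's own function.

```typescript
// Only the part of the /api/tags response this sketch needs.
interface OllamaTags {
  models: { name: string }[];
}

const DEFAULT_MODEL = "gpt-oss:20b";

// Put the default model first, followed by every other discovered model.
function orderModels(tags: OllamaTags): string[] {
  const discovered = tags.models
    .map((m) => m.name)
    .filter((name) => name !== DEFAULT_MODEL);
  return [DEFAULT_MODEL, ...discovered];
}
```

The default is listed even when discovery returns nothing, which matches the statement that gpt-oss:20b is always registered.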

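| setting | value |
|---|---|
| Default model | gpt-oss:20b |
| Discovery endpoint | http://localhost:11434/api/tags |
| Context window | 65 536 tokens |
| Cost | $0 / token |

Calibration Nudge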

NeuroLoop™ tracks the last time a calibration nudge was sent in ~/.neuroskill/last_calibration_prompt.json. If ≥ 24 hours have elapsed, a reminder is injected into the system prompt — at most once per day, once per session.
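The timing rule can be sketched as a small pure function. The name `shouldNudge`, its parameters, and the choice to nudge when no previous timestamp exists are assumptions; the layout of last_calibration_prompt.json is not documented here.

```typescript
const NUDGE_INTERVAL_MS = 24 * 60 * 60 * 1000; // 24 hours

// lastSentMs: time of the previous nudge in epoch milliseconds, or undefined if none is recorded.
function shouldNudge(
  lastSentMs: number | undefined,
  nowMs: number,
  nudgedThisSession: boolean,
): boolean {
  if (nudgedThisSession) return false;      // at most once per session
  if (lastSentMs === undefined) return true; // assumption: a missing record counts as overdue
  return nowMs - lastSentMs >= NUDGE_INTERVAL_MS;
}
```

Passing the current time in as a parameter keeps the check deterministic, so the 24-hour boundary can be tested without waiting or mocking the clock.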

| setting | value |
|---|---|
| Interval | 24 hours |
| State file | ~/.neuroskill/last_calibration_prompt.json |
| Command | neuroskill_run → calibrate |

Let your brain lead the conversation

Install NeuroLoop™, connect your Muse headset via neuroskill, and start a conversation where your EEG state shapes every reply.